User-Generated Software is User-Generated Content

Software abundance and platform governance

The phrase “user-generated content” (UGC) acquired its bureaucratic weight in the mid-2000s, after years of people doing the activity first and policy arriving later. The OECD report that canonized the term treated it as a supply condition: content made publicly available, containing some creative effort, produced outside professional routines.

Each element of that definition implies a need for governance. Publication implies distribution. Creative effort implies variability and conflict about what counts as “good” or “allowed.” Production outside professional routines implies that the prior institutional scaffolding of accountability and competence is absent, or arrives late, or arrives indirectly through platforms, norms, and enforcement. The most important sentence in that report is not the definition itself, but the surrounding acknowledgment that the category has no fixed border and evolves as monetization, professionalism, and platform incentives blur the old line between “user” and “producer.”

The primary claim of this essay is that user-generated software names a structural shift in how software enters the world: from authored artifacts produced under professional scarcity to moderated streams produced under generative abundance.

Historically, when the cost of producing a medium collapses, institutions stop organizing around authorship and begin organizing around moderation, distribution, and execution control. Software resisted this pattern only because code remained expensive to produce and integrate. Vibe coding collapses that barrier, forcing institutions to govern software the way they already govern user-generated content.

MUDdy Histories of Abundant Media

In the early 1990s, at Xerox PARC, Pavel Curtis ran LambdaMOO, a text-based virtual world whose users built rooms and objects and, crucially, wrote scripts that made those objects do things. Curtis described the place as a “MOO,” a kind of MUD where object-oriented programming was available to ordinary participants. The result was not “content” in the later Web sense. A better descriptor is executable participation. It resembled a game because it had a world and an audience. It resembled an operating environment because it had permissions, capabilities, and shared resources that could be consumed, damaged, or seized. Accounts could create artifacts that acted on other people’s experience. Social conflict manifested as code, and code manifested as social conflict.

A few people learned quickly that scriptable objects are power, and that power needs boundaries even when nobody wants to say the word “authority” out loud. LambdaMOO developed “wizard” roles, privileges, and practices that look, in retrospect, like early platform operations: escalation paths, access control, logging, sanction, and a rough distinction between content disputes and system disputes. The infamous 1993 “Mr. Bungle” incident later became shorthand for a moral scandal, but the institutional lesson inside the system was narrower and colder: a capability existed that allowed one actor to impose a state change on others without meaningful consent, and the governance structure lacked the legitimacy and tooling to respond. The post-incident “petition” and ensuing debates were arguments about who had the right to change the rules and how that right could be exercised.

It is tempting to treat this as ancient lore, a curiosity from before broadband and smartphones, but the structure repeats. When participation becomes easy and the medium becomes abundant, labor moves away from making the medium and toward filtering, administering, and controlling what the medium can do when it meets the world. Clay Shirky, writing in 2002 about broadcast institutions and online community, made the operational inversion explicit. Broadcast relies on “filter, then publish.” Communities are “publish, then filter.” When the pipeline flips, the institution’s center of gravity moves with it. Editors and gatekeepers stop being the primary locus of control. Moderators, ranking systems, enforcement mechanisms, and interface constraints take over, sometimes slowly, sometimes as a late-night incident response exercise that becomes permanent policy the next morning.

The OECD report is full of managerial tells if read as an operations document rather than a cultural one. It spends pages on “drivers,” “value chains,” “monetisation,” and “opportunities and challenges.” It is describing the moment when UGC stops being a sociology story about amateurs and becomes an institutional story about infrastructure that has to host, filter, and defend itself. That is why, even in its taxonomy work, it cannot avoid touching open-source software and virtual worlds, even if they sit awkwardly beside blogs and video sharing. The earlier internet already contained software-like participation; it just did not yet have a name that made it legible to people who did not live inside it.

A useful way to keep the discussion honest is to resist the story that software is categorically different from media because it is “functional.” Plenty of media is functional. Instructions, recipes, forms, spreadsheets, checklists, and training videos all function, and institutions govern them. The more relevant distinction is executability. Some forms of participation can change state inside a system. Others merely represent something. LambdaMOO blurred that line early: the act of creating a room was representational, while the act of creating a scripted object was executable, yet both were “user-made.” In later decades, the same blur appears in game mods, scripting environments, and plugin ecosystems. A Half-Life mod becomes Counter-Strike, and eventually becomes a Valve-maintained franchise. The legitimacy migrates. The artifact begins as a gift or a hack; the platform later treats it as inventory, then product, then liability. That migration is not a cultural coronation. It is a governance handoff from authorship to administration, from “who made this” to “who is allowed to run this and under what constraints.”

The mod scene is often treated as a prehistory of influencer culture, a story about creativity and fandom, but it is closer to a prehistory of platform operations. Mods are user-generated software in the plain sense: users write code that changes a system’s behavior, then distribute it through channels that may or may not be sanctioned, then negotiate norms and enforcement with the host community and the platform owner. The platform owner eventually learns that the difference between “tolerated intervention” and “suppressed intervention” is rarely about aesthetic taste. It is about whether the intervention threatens the platform’s ability to govern execution and distribution. That is why some platforms tolerate skins and maps while treating automation, bots, and rule-changing scripts as existential, even when both are “creative.” In the mod world, changing the rule set is governance, and platforms develop a nose for it.

What Is User-Generated Software? (a.k.a. UGS)

If the phrase “user-generated software” sounds like rebranding, it helps to name what it is not. It is not “no-code,” which is typically about reducing technical barriers inside a bounded enterprise environment where legitimacy is already pre-allocated by org charts and access management. It is not “citizen development,” which frames amateurs as latent staff and assumes the firm is the unit that absorbs and legitimizes their work. It is not a claim that more people will become competent engineers. It is a claim about how legitimacy is assigned.

User-generated software is software whose legitimacy is externalized to the platform that hosts it. In that framing, the author’s status recedes as a control point, and the platform’s governance mechanisms become the center of operational reality. This is the same move that happened with user-generated media: authorship mattered culturally, yet the operational problem was distribution, moderation, enforcement, and the ability to set boundaries when “publish, then filter” became the default order of events.

Yochai Benkler’s early-2000s work on commons-based peer production is often cited as an economic argument about why open source exists. Read operationally, it is an integration argument. Peer production works when integration and quality control are solved through a combination of platform-embedded technical mechanisms, social norms, and some limited reintroduction of hierarchy or market mechanisms for integration itself. The vulnerabilities are visible in his language: the system must defend against incompetent or malicious contributions; integration is the central limiting factor; governance arises from the need to combine modules and police their integrity. That is a vocabulary that aligns with modern CI pipelines, package registries, moderation queues, and trust-and-safety tooling more than it aligns with the romantic story of volunteers writing code for fun.

The boundary of user-generated software becomes clearer when traced through cases that organizations already live with but do not usually name as software production. Excel macros are the canonical example because they look innocuous and familiar. A macro begins as a local convenience, written by someone solving their own problem inside a spreadsheet that already has institutional legitimacy. Over time, the macro accretes users, assumptions, and side effects. It pulls data from external systems, encodes business rules, and quietly becomes executable logic that affects decisions. At no point does it pass through a formal software lifecycle, yet it behaves like software in every operationally relevant sense. When it breaks, the question is no longer who wrote it, but whether it can be traced, bounded, audited, or retired. The spreadsheet becomes a host environment, and the macro becomes user-generated software whose legitimacy derives from continued tolerance rather than formal approval.

Salesforce workflows and similar SaaS-native automation tools extend this pattern. They are marketed as configuration rather than programming, yet they encode branching logic, state transitions, and enforcement of policy. A workflow created by a sales manager can block orders, trigger downstream actions, or leak data, depending on how it is composed. The platform legitimizes the action by making it available through an interface, but the enterprise bears the consequences of its execution. Governance emerges not at the point of creation but at the point where the workflow intersects with revenue recognition, compliance, or customer experience. The organization eventually responds with naming conventions, approval processes, sandboxes, and limits on who can deploy what where. The pattern is familiar: production is cheap, evaluation is expensive, and legitimacy migrates to the platform’s execution controls.

Browser extensions and internal scripts sit at the edge of the enterprise but follow the same logic. An extension that scrapes data, injects UI changes, or automates form submission is executable participation in an external system. An internal script that pulls from APIs and produces reports becomes a dependency the moment others rely on it. Plugin marketplaces formalize this drift. They invite users to extend a core system, then gradually introduce review, signing, revocation, and monetization. The marketplace becomes the site of legitimacy, and the plugin becomes inventory. In each case, the question that matters is not whether the artifact was authored by a professional, but whether the host environment can see it, constrain it, and survive it. That is the mechanical boundary of user-generated software.

A Senior VP of Operations has likely lived through at least one wave of user-generated media inside a corporate perimeter, even if it did not arrive under that name. A discussion forum for customers becomes a support channel, then becomes a reputation risk, then becomes a moderation and escalation workload. A wiki becomes knowledge management, then becomes contested, then becomes subject to permissions, retention, and audit. An internal dashboard that started as “self-serve” becomes a decision dependency, then becomes a production system whose definition of truth is debated, then becomes governed because it has to be. The institutional pattern is stable. Production becomes cheap. Variation and conflict increase. The organization reallocates labor from creation to evaluation. Governance emerges as a way to survive abundance rather than a way to restore scarcity.

Software’s historical exemption from the UGC category came from scarcity. Writing production code required trained labor, specialized tooling, and integration work that was not separable from professional routines. Even when a user could write code, the code did not easily enter the organization’s execution pathways. It could live in a spreadsheet macro, a script, a side database, or an unofficial automation, but it rarely became a mainstream input into the firm’s core systems without passing through professional choke points. The choke points were not only cultural. They were technical and procedural. Deployment, access control, change management, and the privilege to run code in production environments acted as scarcity filters. “UGC does not include software” was therefore a reasonable mental model for a long time, because the cost of producing code that mattered inside institutional systems was high enough that the code looked like an authored artifact, even when it originated from users.

Then the scarcity barrier moved.

The Software Feed

The first visible changes were subtle and operational rather than ideological. In large enterprises that adopted GitHub Copilot and adjacent tools from 2021 onward, the immediate effect was neither a sudden collapse of software quality nor a sudden acceleration to magical productivity. The felt effect was a change in the local ratio of writing time to reviewing time. Code appeared faster. The code was plausible. It often compiled. It often looked stylistically consistent. It also carried small, hard-to-detect errors: regressions, missing edge cases, stale assumptions, security footguns, unnecessary dependencies, and a tendency to project unwarranted confidence when using unfamiliar libraries. The most expensive part of software work, which was never typing, became more visibly the bottleneck: evaluation, verification, and ongoing comprehension. The tool reduced the price of producing candidate artifacts. It did not reduce the cost of deciding what a candidate artifact would do in a particular environment, with particular data, under particular constraints, with a particular failure budget. The operational texture resembled a content platform more than a software shop: a stream of plausible contributions that required filtering, ranking, review, and enforcement.

This is where “vibe coding” comes into play. It captures the idea that the dominant interface becomes natural language and intention rather than the codebase itself, and that the output becomes a flow of candidates rather than a single authored artifact. When a medium becomes abundant, the critical governance question shifts away from production and toward what gets to circulate and what gets to execute. Shirky’s inversion—publish then filter—stops being a media story and becomes a software story; the codebase begins to behave like a feed.

Platforms script participation through interfaces, defaults, metrics, and affordances. In vibe coding environments, the interface scripts programming. The model proposes what to do next; the developer’s role drifts toward selection, rejection, and supervision; the system’s momentum nudges toward whatever path is easiest to express in prompt form. This is not a moral claim about human laziness. It is a description of a workflow that makes some actions cheap and others expensive. When cheapness moves, practice moves with it.

There is a single analogy worth using once and then discarding. Digital cameras did not destroy photography by making images easier to produce. They changed the unit of production from a curated set of photographs to an abundant archive, and then platforms like Instagram turned that archive into a stream governed by filters, feeds, and enforcement layers. The governance layer became the differentiator because the medium became abundant. What mattered was not the ability to take a photo; it was what got seen, how it was ranked, what was removed, what was monetized, and what was permitted to circulate. Vibe coding does the analogous thing to software production without making software comprehension cheap. The result is a new gap: a widening delta between the rate at which candidates can be produced and the rate at which they can be evaluated responsibly.
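The gap between production and evaluation can be made concrete with a toy calculation. The sketch below is illustrative only: the function name `evaluation_backlog` and the weekly figures are hypothetical, not measurements from any organization. It assumes a fixed number of candidates produced per week and a fixed review capacity, and tracks how many candidates remain unevaluated.

```python
def evaluation_backlog(produced_per_week: int,
                       review_capacity_per_week: int,
                       weeks: int) -> list[int]:
    """Return the unevaluated backlog at the end of each week.

    Hypothetical model: production and review capacity are constant,
    and the backlog can never drop below zero.
    """
    backlog = 0
    history = []
    for _ in range(weeks):
        backlog = max(0, backlog + produced_per_week - review_capacity_per_week)
        history.append(backlog)
    return history


# Same review capacity, two different production rates (made-up numbers).
before = evaluation_backlog(produced_per_week=10, review_capacity_per_week=8, weeks=4)
after = evaluation_backlog(produced_per_week=30, review_capacity_per_week=8, weeks=4)
```

In this toy model the backlog grows linearly whenever production exceeds capacity. Cheaper generation raises the first number and leaves the second untouched.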

The unsettling dynamic that appears in abundant media ecosystems is factory farming. When content production is abundant and cheap, the ecosystem incentivizes high-volume, low-accountability output that is optimized for visibility and engagement under platform rules. Software has a version of this dynamic when generation becomes cheap: internal teams and external vendors can produce large volumes of code-like artifacts, plugins, automations, scripts, and microservices that appear useful at first glance, satisfy the immediate local need, and gradually turn into a dense layer of unowned execution. If this sounds abstract, it already has familiar forms: the sprawl of unofficial dashboards, the proliferation of internal bots, the accretion of brittle glue code, the one-off scripts that become permanent dependencies. The change is that the marginal cost of producing these artifacts approaches the cost of describing them, while the integration and lifecycle costs remain stubborn. That is the point where software begins to enter the world as a moderated stream rather than an authored artifact.

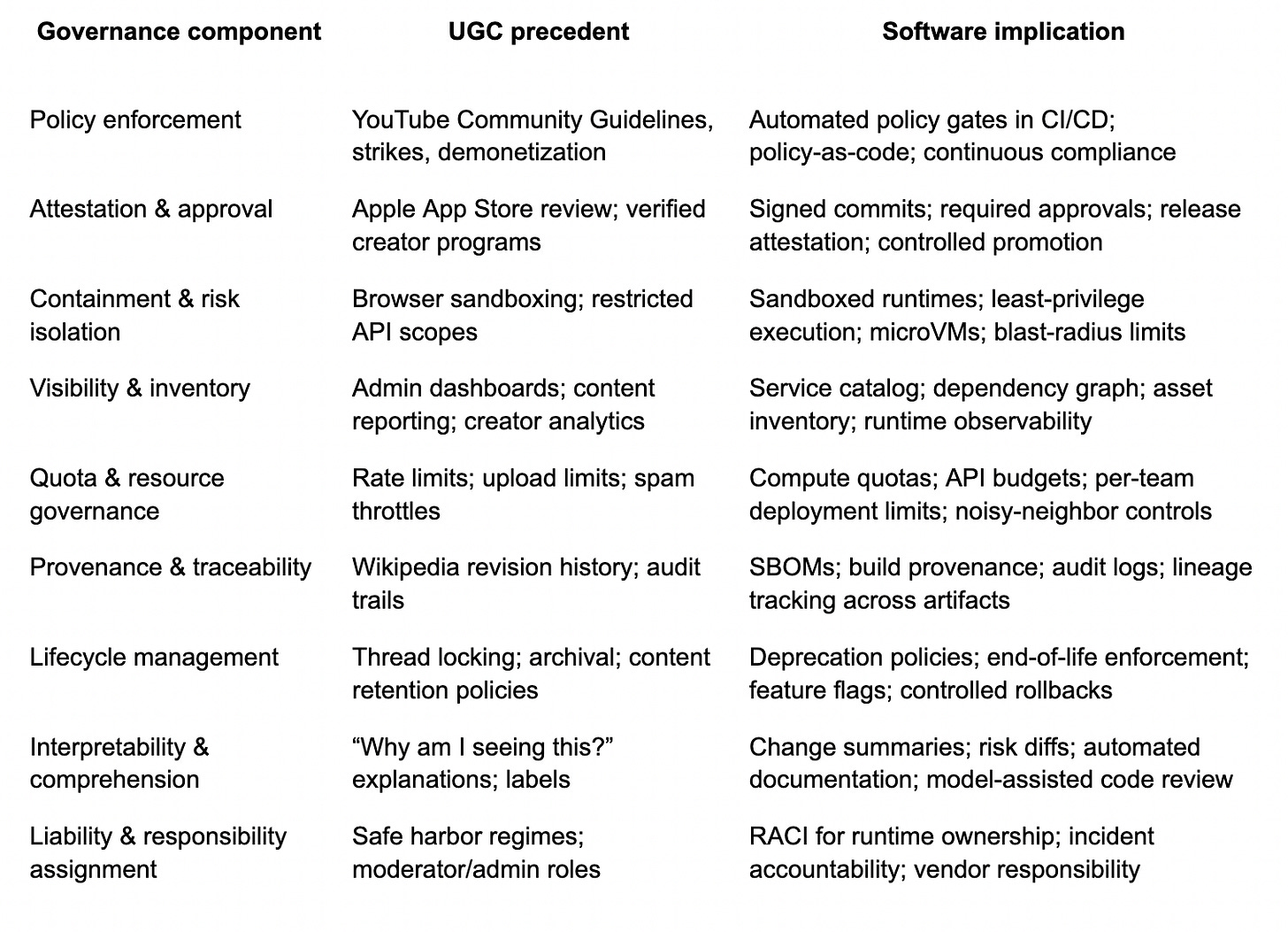

Institutions rarely respond to this by trying to restore scarcity. Scarcity restoration is expensive and politically fragile. The more durable move is governance. In user-generated media, that governance took recognizable forms: community guidelines, moderation queues, ranking systems, content identification, demonetization, strike policies, provenance tooling, and liability allocation. The claim here is that user-generated software triggers reuse of these mechanisms because the underlying operational problem is the same: abundant production with costly evaluation, combined with meaningful externalities when an artifact circulates or executes.

The UGS Governance Stack

A platform does not need to “believe” a piece of content to host it. It needs to decide whether to distribute it and how to bound its effects. A platform does not need to “believe” a piece of software to host it either. It needs to decide whether to allow it to execute, where, with what permissions, under what review regime, with what monitoring, and with what rollback capacity. The governance of execution becomes the analog to the governance of distribution. The older internet learned this in worlds like LambdaMOO because the difference between content and execution was already blurred. Modern enterprises are learning it because that blur is now arriving at scale inside standard development workflows.

The App Store is a useful anchor because it is an early, large-scale example of execution governance for software distributed through a platform. Apple’s review process evolved from a relatively simple gate to an extensive, continuously updated enforcement regime, backed by policy documents, technical checks, and a revocation apparatus that can remove apps after approval. That evolution resembles the evolution of moderation systems on media platforms: the rules accrete, enforcement becomes a hybrid of automation and humans, and legitimacy becomes contextual and revocable rather than permanently earned. In parallel, YouTube’s Content ID system, launched in the late 2000s, is a model of enforcement at scale that does not rely on authorship as the unit of control. It matches, flags, blocks, monetizes, and routes disputes through a platform-defined process. The institutional posture is similar across both cases: abundant submissions are assumed; evaluation is constrained; enforcement is continuous; the platform owns the pipeline.

Lawrence Lessig described the deeper mechanism years earlier in a different context. When rules are enforced by machines rather than humans, code becomes law in the practical sense that constraints become embedded in architecture and backed by legal regimes that treat violations of code-enforced rules as violations of law or policy. He was writing about copyright, DRM, and the way architecture can erase certain freedoms while the law then stabilizes that architecture. Once enforcement sits in the architecture, legitimacy is no longer primarily an attribute of the author. It is an attribute of compliance with the platform’s execution rules.

At this point it becomes possible to say “UGC governance systems” without meaning “trust and safety” as a department. It means a stack of mechanisms that allow an institution to survive abundance. That stack already exists in mature software organizations in partial form, often scattered across security, platform engineering, developer productivity, compliance, and risk functions. What changes under user-generated software is that the stack stops being optional hygiene and becomes the center of how software enters the world.

None of the components in that stack is new. The novelty is that the same governance moves that stabilized user-generated media are being imported, piece by piece, into software production because software is beginning to behave like a participative medium whose default state is abundance and whose dangerous edge is execution rather than distribution.

Shirky’s “publish, then filter” line describes the point where content becomes governable through post-publication filtering rather than pre-publication gatekeeping.

In software, the analog is “merge, then contain,” or “deploy, then constrain,” though those phrases are too neat to trust. The real practice is messier. It looks like feature flags, canary releases, runtime policy enforcement, service meshes, identity-aware proxies, and environment constraints that limit what a piece of software can do even after it has entered the system. It also looks like the spread of approval workflows and attestations, the increase in required reviews, the institutionalization of security scanning, and the growth of internal platforms that make “the paved road” the only road that reliably leads to production. These are all governance mechanisms that become central when production is abundant and evaluation is scarce.
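One of those mechanisms, the percentage rollout with a kill switch, is small enough to sketch. What follows is a minimal illustration, not any vendor's implementation: the `Rollout` class, the `bucket` helper, and the feature name are hypothetical, and hashing users into stable buckets is just one common way to do the assignment.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Rollout:
    feature: str
    percent: int          # share of users exposed, from 0 to 100
    enabled: bool = True  # kill switch: False turns the feature off for everyone


def bucket(feature: str, user_id: str) -> int:
    """Map a (feature, user) pair to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def is_exposed(rollout: Rollout, user_id: str) -> bool:
    """Already-deployed code runs for this user only if the rollout allows it."""
    return rollout.enabled and bucket(rollout.feature, user_id) < rollout.percent


# The code is merged and deployed; exposure is what the platform governs.
canary = Rollout(feature="generated-report-export", percent=5)
killed = Rollout(feature="generated-report-export", percent=5, enabled=False)
```

The design point is that the constraint lives outside the artifact. Widening `percent` or flipping `enabled` changes what the software can do without anyone reading or rewriting it.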

Benkler’s integration emphasis becomes newly legible here. Integration is the limiting factor; quality control is defense against incompetent or malicious contributions; technical mechanisms and social norms combine to make peer production viable. Under vibe coding, the peer is not only an open-source contributor. The peer is also the internal analyst who now generates scripts, the product manager who generates an automation, the vendor who generates a connector, the engineer who generates a patch under time pressure, and the model itself proposing candidate changes. The institution becomes a host for heterogeneous contributors whose production rate has increased, while the institution’s integration capacity has not.

The shift is therefore not a story about democratization in the celebratory sense. It is closer to the story the OECD report tells about UGC becoming a new value chain where hosting, searching, aggregating, filtering, and diffusing become central commercial activities.

When software becomes abundant, hosting and filtering of executable artifacts becomes central. That hosting is not GitHub as a repository. It is the enterprise’s internal execution environment: its identity systems, its policy engines, its build pipelines, its runtime platforms, its monitoring systems, its procurement apparatus, its incident response machinery. The value is no longer created only by writing code. It is created by making code governable, legible, and survivable.

Upcoming Reorgs

The outdated mental model treats software as authored artifacts: discrete objects made by professionals, reviewed by professionals, and deployed through controlled pipelines. Under that model, the key organizational questions center on productivity, staffing, delivery cadence, and architectural correctness. A stream ontology forces additional questions that resemble platform governance questions: what gets to run, under what permissions, how it is observed, how it can be removed, how it is traced, and who carries responsibility when it behaves badly.

The institutional response is therefore unlikely to be “novel regulation” in the first instance, even if regulation arrives later. The initial response will look like the reuse of existing governance patterns because those patterns already exist in the organization’s muscle memory. The company already has approval chains for spending; those chains will be adapted to approvals for execution pathways. The company already has compliance controls for data access; those controls will be adapted into runtime policy enforcement. The company already has inventory and asset management practices; those will extend into inventories of services, automations, and dependencies. The company already has incident response; it will become the enforcement backstop for software that is produced faster than it can be fully understood.

Lessig’s “code becomes law” frame, applied without the culture-war baggage, becomes a simple observation about where enforcement sits when scale overwhelms human judgment. YouTube built an enforcement system because the volume of uploads made case-by-case adjudication impossible. App stores built review regimes because the volume of apps and the risk of execution required a gate plus revocation. Enterprises will build and extend execution governance for the same reason: the system has to survive abundant input.

Around the mid-2010s, firms like Netflix and Spotify began to build internal platform teams whose job was to govern execution pathways rather than to build end-user features directly. This rise is sometimes described as “platform engineering,” sometimes as “developer productivity,” sometimes as “paved roads,” sometimes as “internal developer platforms.” These teams treated the organization as a multi-tenant platform where product teams are internal users, and where the primary operational challenge is controlling how software enters production, how it is observed, and how it fails. The old prestige economy of authorship—who writes the clever code—begins to compete with a new prestige economy—who controls the execution environment. In organizations where this shift is far along, the platform team becomes a power center because it owns the constraints.

This regime boxes in shadow systems. A company can tolerate unofficial scripts and automations when they live in low-risk environments. The tolerance ends when those scripts become dependencies for decisions, revenue, or regulated processes. Then the institution kills them, absorbs them, or boxes them, surrounding them with governance until they become legible enough to live. Absorption looks like formal ownership, documentation, and integration into the platform’s inventory. Boxing looks like sandboxed environments, restricted permissions, quotas, monitoring, and enforced change management. Killing looks like decommissioning and replacing the functionality with a sanctioned system. The important point is that the response pattern resembles content moderation more than it resembles traditional software project management. It is a response to abundance.
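The three responses can be written down as a triage rule, which makes the resemblance to a moderation queue easier to see. The sketch below is an assumption-laden illustration: the `ShadowSystem` fields, the `Response` values, and especially the order of the checks are hypothetical choices, since the essay names the outcomes but not a decision procedure.

```python
from dataclasses import dataclass
from enum import Enum


class Response(Enum):
    TOLERATE = "tolerate"
    KILL = "kill"
    ABSORB = "absorb"
    BOX = "box"


@dataclass
class ShadowSystem:
    name: str
    feeds_critical_process: bool      # decisions, revenue, or regulated work
    has_sanctioned_alternative: bool  # an approved system could replace it
    has_willing_owner: bool           # someone will take formal ownership


def triage(system: ShadowSystem) -> Response:
    """Hypothetical ordering: tolerate low-risk scripts, else kill, absorb, or box."""
    if not system.feeds_critical_process:
        return Response.TOLERATE
    if system.has_sanctioned_alternative:
        return Response.KILL    # decommission and replace
    if system.has_willing_owner:
        return Response.ABSORB  # ownership, documentation, inventory
    return Response.BOX         # sandbox, quotas, monitoring


# A made-up example: an unowned macro that has become a revenue dependency.
macro = ShadowSystem("quarterly-pricing-macro", True, False, False)
decision = triage(macro)
```

Nothing in the rule asks who wrote the script or how well. It asks only what the script touches and whether the institution can account for it.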

Terranova’s “free labor” critique of user-generated content tends to be read as political economy, and it is, but it also maps cleanly to a practical organizational tension: labor shifts from creation to maintenance and evaluation, and that labor becomes less visible because it is distributed across many actors and occurs in the filtering layer rather than in the obvious production layer. The work is the work of reading, triaging, reviewing, and enforcing. In a UGC platform, that labor becomes moderation and trust-and-safety plus algorithmic tuning. In an enterprise, that labor becomes code review, security review, platform governance, incident management, compliance, and the slow work of making systems comprehensible. The institution’s cost center drifts. The staffing model drifts with it. The reporting model often lags, which creates a familiar kind of operational blindness where leadership believes production capacity has increased because output volume has increased, while the organization is quietly accumulating evaluation debt.

A detail from Benkler helps keep this from becoming mere rhetoric. In peer production systems, integration is solved through technical solutions embedded in the platform, norm-based social organization, and limited reintroduction of hierarchy or market mechanisms for integration. Enterprises already do this. They embed technical solutions in platforms: CI gates, infrastructure templates, policy engines. They rely on norms: review expectations, coding standards, on-call cultures. They reintroduce hierarchy: approvals, architecture reviews, risk sign-offs. Under user-generated software, those existing mechanisms stop being “process overhead” and become the practical substrate that allows execution to remain governable as the input stream becomes cheaper to produce.

This is one reason the “AI safety” framing often feels misplaced when viewed from operations. AI safety discourse tends to focus on model behavior as a singular object. User-generated software is a pipeline phenomenon. The operational risk comes less from any one generated snippet and more from the system’s capacity to ingest and execute a larger volume of partially understood change. The institution’s response therefore gravitates toward pipeline controls: provenance, attestation, gating, containment, visibility, quotas, lifecycle management. These are not new. They are the inheritance of UGC governance mechanisms translated from distribution control to execution control.

There is also a less discussed consequence: legitimacy shifts away from the artifact itself. In older software mental models, legitimacy is earned through authorship, review, and a kind of artisanal narrative about how the code came to be. In abundant media systems, legitimacy is earned through platform status, compliance, and ongoing behavior under enforcement regimes. An account is trusted because it has history, verification, and adherence to rules. A piece of content is trusted because it is ranked, labeled, contextualized, or endorsed within the platform’s epistemic machinery. Software under vibe coding drifts toward the same condition. A service is trusted because it is in inventory, has owners, has observability, passes policy, has provenance, and is bounded by containment. The code may be unreadable. The system remains governable if execution is constrained and behavior is visible.
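That condition can be stated as a check that never opens the source code. The following is a minimal sketch under stated assumptions: `ServiceRecord` and its field names are hypothetical labels for the signals listed above, not the schema of any real service catalog.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ServiceRecord:
    in_inventory: bool
    has_owner: bool
    has_observability: bool
    passes_policy: bool
    has_provenance: bool
    is_contained: bool


def missing_signals(record: ServiceRecord) -> list[str]:
    """List the platform signals this service lacks, in declaration order."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


def is_trusted(record: ServiceRecord) -> bool:
    """Trust here is a property of platform status, not of the code itself."""
    return not missing_signals(record)


# A made-up service that is registered and monitored but has no owner.
orphan = ServiceRecord(
    in_inventory=True,
    has_owner=False,
    has_observability=True,
    passes_policy=True,
    has_provenance=True,
    is_contained=True,
)
gaps = missing_signals(orphan)
```

Note what the record leaves out: there is no field for readability, authorship, or elegance. Under this sketch a service loses trust the moment a signal lapses, which is what makes legitimacy revocable.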

This return to visibility is where Nancy Baym’s work on relational scaffolding becomes unexpectedly relevant. Communities sustain when relational context exists: continuity, recognition, norms, and shared maintenance work. Platforms build interaction scaffolding because raw posting tools plateau without relational maintenance. The enterprise analog is that raw code generation tools plateau without governance scaffolding. The organization may generate more artifacts than it can relate to, understand, and maintain. The maintenance relationship is not sentimental. It is the practical continuity that allows an institution to treat a system as real. An unowned script is like an unmoderated forum thread: it exists, it accumulates, it becomes a locus for problems, and it produces incidents when nobody is accountable for its boundaries.

In the end, the argument returns to LambdaMOO because that world is simpler than the modern one and therefore clearer. Wizards learned that execution is where control lives. They did not always like this fact. They argued about legitimacy. They worried about being seen as tyrants. They tried to distribute authority and still maintain system integrity. The vocabulary was messy, but the operational truth was stable: when participants can create executable artifacts inside a shared environment, governance becomes a survival function.

The present stakes are financial, legal, and infrastructural. Yet the shape is recognizably the same. User-generated software is the condition of software production under generative abundance, when the cost of producing plausible candidates collapses while the cost of evaluating them stays high. That condition pulls software into the same governance logic that institutions built for user-generated media because the same problem has returned in a new form: abundance plus externalities plus limited human review.